Read the full article on DataCamp: How to Set Up and Run DeepSeek-R1 Locally With Ollama

Learn how to install, set up, and run DeepSeek-R1 locally with Ollama and build a simple Retrieval-Augmented Generation (RAG) application.

Overview

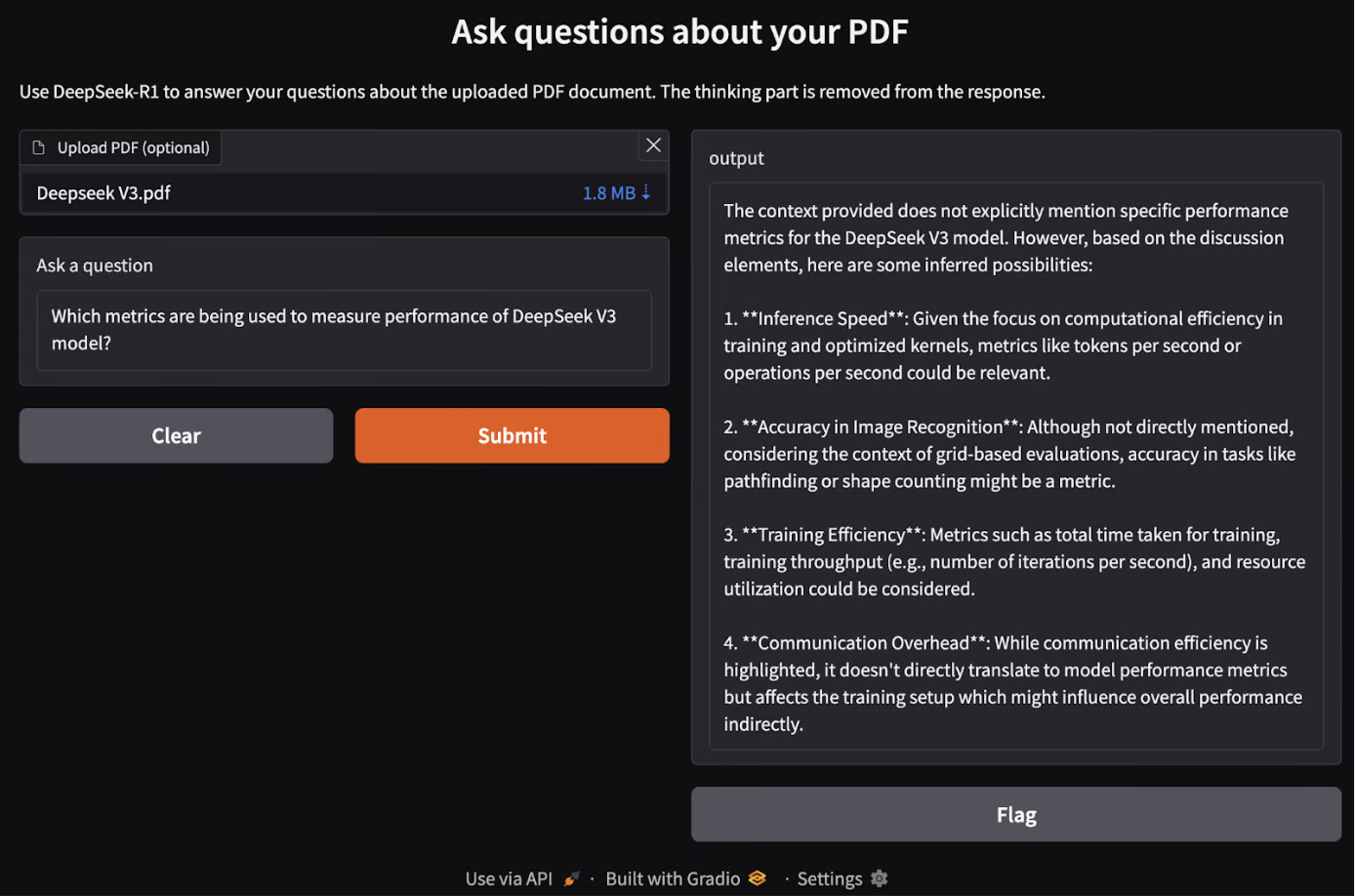

In this tutorial, you’ll learn step by step how to set up and run DeepSeek-R1 locally with Ollama. We’ll also build a simple RAG application that runs on your laptop using the R1 model, LangChain, and Gradio.

If you only want an overview of the R1 model, check out this DeepSeek-R1 article. To learn how to fine-tune R1, refer to this tutorial on fine-tuning DeepSeek-R1.

Why Run DeepSeek-R1 Locally?

Running DeepSeek-R1 locally offers several advantages:

- Privacy & Security: No data leaves your system.

- Uninterrupted Access: Avoid rate limits, downtime, or service disruptions.

- Performance: Avoid network round trips to a remote API; actual response speed depends on your hardware and the size of the model you run.

- Customization: Modify parameters, fine-tune prompts, and integrate the model into local applications.

- Cost Efficiency: Eliminate API fees by running the model locally.

- Offline Availability: Work without an internet connection once the model is downloaded.
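To make the customization and integration points above concrete, here is a minimal sketch of calling a locally served model from a Python script. It assumes an Ollama server is already running at its default local address (`http://localhost:11434`) and that a model has been pulled under the tag `deepseek-r1`; both values, as well as the helper names `build_payload` and `ask_local_model`, are illustrative assumptions rather than something prescribed by this article.

```python
import json
import urllib.request

# Assumed default address of a local Ollama server; adjust if yours differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt, model="deepseek-r1", temperature=0.6):
    """Build the JSON body for a single, non-streaming generation request."""
    return {
        "model": model,      # assumed model tag; use whichever tag you pulled
        "prompt": prompt,
        "stream": False,     # request one complete JSON reply, not chunks
        "options": {"temperature": temperature},  # sampling parameter
    }


def ask_local_model(prompt, **kwargs):
    """Send the prompt to the local server and return the generated text."""
    body = json.dumps(build_payload(prompt, **kwargs)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
    )
    # The request never leaves the machine: it targets localhost only.
    with urllib.request.urlopen(request) as reply:
        return json.loads(reply.read())["response"]
```

With the server running, `ask_local_model("Explain RAG in one sentence.", temperature=0.2)` would return the model's text. Keeping payload construction in its own function makes it easy to adjust parameters without touching the network code.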

Learn more by reading the full guide on DataCamp.
